As artificial intelligence systems grow more complex, the challenge is no longer just building accurate models; it is managing the intricate processes that bring those models into production. Data ingestion, transformation, training, validation, deployment, monitoring, and retraining must work together reliably and repeatedly. This is where AI workflow orchestration platforms such as Prefect play a crucial role. They provide structure, observability, and automation for data and machine learning pipelines, enabling organizations to scale AI initiatives with confidence.

TLDR: AI workflow orchestration platforms like Prefect automate and manage complex data and machine learning pipelines. They improve reliability, visibility, and scalability by coordinating tasks, handling failures, and monitoring workflows in real time. These platforms help teams move from experimental notebooks to robust production systems. As AI adoption grows, orchestration becomes a foundational layer of modern data infrastructure.

Without orchestration, AI workflows are often stitched together with ad hoc scripts, cron jobs, and manual interventions. While such setups may function in early experimentation stages, they rarely withstand production demands. Errors become difficult to trace, dependencies become fragile, and complexity compounds as operations scale. A structured orchestration platform addresses these issues by turning scattered processes into coherent, manageable workflows.

The Growing Complexity of AI Pipelines

Modern AI systems typically involve multiple interconnected stages:

- Data ingestion from APIs, databases, IoT devices, or third-party services

- Data cleaning and transformation using frameworks such as Pandas or Spark

- Feature engineering and dataset versioning

- Model training across distributed compute environments

- Evaluation and validation against performance metrics

- Deployment into production environments

- Monitoring and retraining to combat data drift

Each stage depends on others. When one task fails or produces inconsistent results, the entire pipeline can break. Orchestration platforms such as Prefect are designed to manage these interdependencies with precision and resilience.

Prefect, in particular, approaches workflows as directed graphs of tasks. Developers define tasks in Python, express dependencies (often implicitly, by passing one task's output to another), and let the platform manage execution logic. This separation of business logic from execution infrastructure creates cleaner, more maintainable codebases.
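
The directed-graph idea can be illustrated with a minimal sketch that uses only Python's standard library, not Prefect's API. The task names and the `PIPELINE` mapping are hypothetical; the point is only that once dependencies are declared, a valid execution order can be derived rather than hand-coded.

```python
from graphlib import TopologicalSorter  # standard library, Python 3.9+

# Hypothetical pipeline: each key maps to the set of tasks it depends on.
PIPELINE = {
    "ingest": set(),
    "clean": {"ingest"},
    "features": {"clean"},
    "train": {"features"},
    "evaluate": {"train"},
    "deploy": {"evaluate"},
}


def execution_order(graph):
    """Return one order in which every task runs after its prerequisites."""
    return list(TopologicalSorter(graph).static_order())


def run_pipeline(graph, task_funcs):
    """Run tasks in dependency order, handing each task its upstream results."""
    results = {}
    for name in execution_order(graph):
        upstream = {dep: results[dep] for dep in graph[name]}
        results[name] = task_funcs[name](upstream)
    return results
```

Because the business logic lives in `task_funcs` and the ordering lives in `run_pipeline`, either side can change without touching the other, which is the separation the paragraph above describes.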

What Makes Orchestration Platforms Essential

AI orchestration tools are not merely schedulers. They provide advanced capabilities that go far beyond running scripts at set intervals. Among the most important features are:

- Dependency Management: Tasks execute only when prerequisites are satisfied.

- Failure Handling: Automatic retries, alerts, and fallback logic.

- Observability: Real-time logs, metrics, and execution visibility.

- Scalability: Seamless execution across distributed infrastructure.

- Parameterization: Workflows accept runtime parameters and can be triggered dynamically by external events.

In traditional setups, these capabilities must be manually engineered. With platforms like Prefect, they are built in, reducing operational overhead and improving maintainability.
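
To make "manually engineered" concrete, here is a rough sketch of the retry-and-alert logic a team would otherwise write by hand. It is plain Python rather than any orchestrator's API; `run_with_retries`, `base_delay`, and `on_failure` are names invented for this illustration.

```python
import time


def run_with_retries(task, retries=3, base_delay=1.0, sleep=time.sleep, on_failure=None):
    """Call task(); on an exception, retry up to `retries` times.

    The wait doubles after each failed attempt (exponential backoff).
    If every attempt fails, `on_failure` is called once (for example,
    to send an alert) and the last exception is re-raised.
    """
    attempt = 0
    while True:
        try:
            return task()
        except Exception as exc:
            if attempt >= retries:
                if on_failure is not None:
                    on_failure(exc)
                raise
            sleep(base_delay * 2 ** attempt)
            attempt += 1
```

The `sleep` argument is injectable so the backoff schedule can be checked without actually waiting. An orchestration platform typically reduces all of this to a configuration option on the task.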

Reliability is arguably the most critical benefit. AI models are only as trustworthy as the pipelines that generate and maintain them. If training data updates fail silently or deployment scripts misfire, downstream decisions can be compromised. Orchestration ensures transparency and accountability across every step.

Prefect’s Design Philosophy

Prefect distinguishes itself by emphasizing a developer-first experience. Workflows are written in standard Python, not in proprietary configuration languages. This design allows data scientists and engineers to leverage familiar tools without steep learning curves.

Core architectural components typically include:

- Flows: Represent complete workflows composed of interconnected tasks.

- Tasks: Discrete units of work such as data transformation or model training.

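- Agents (or, in more recent Prefect releases, workers and work pools): Processes that pick up scheduled flow runs and execute them in specific environments.

- Prefect Server/Cloud Backend: Handles coordination and monitoring; the self-hosted server was known as Orion during early Prefect 2 releases.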

This architecture provides both local development flexibility and cloud-scale orchestration. Teams can prototype on laptops and deploy seamlessly into Kubernetes clusters or cloud environments.

Automation Across the AI Lifecycle

AI workflow orchestration is valuable across three primary phases of the model lifecycle: development, production, and maintenance.

1. Development Phase

During experimentation, data scientists iterate rapidly. Orchestration tools enable reproducible experiments by:

- Tracking parameters and configurations

- Scheduling hyperparameter searches

- Managing dataset versions

- Ensuring consistent preprocessing steps

This reduces inconsistencies between experimental notebooks and production pipelines.
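
One simple way to tie a result back to its exact configuration is to fingerprint the parameters together with the dataset version. The sketch below is an illustration under assumed names (`run_fingerprint`, `dataset_version`), not a feature of any particular platform.

```python
import hashlib
import json


def run_fingerprint(params, dataset_version):
    """Return a short, stable ID for a run's parameters plus dataset version.

    Keys are sorted before hashing, so the same configuration always
    produces the same ID regardless of dictionary ordering.
    """
    payload = json.dumps(
        {"params": params, "dataset": dataset_version}, sort_keys=True
    )
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()[:12]
```

Storing this ID alongside metrics and model artifacts lets a notebook experiment and a scheduled production run be compared on equal terms: matching fingerprints mean matching inputs.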

2. Production Deployment

Once models are validated, deploying them involves coordinated actions. An orchestration platform can automatically:

- Trigger training when new data arrives

- Validate model accuracy before promotion

- Deploy models via APIs or batch scoring systems

- Roll back deployments if thresholds are not met

This ensures that production models remain stable and aligned with quality standards.
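
The validate-then-promote step can be sketched as a small gate function. This is an assumption-laden illustration: `ModelVersion`, `promote_or_keep`, and the 0.90 threshold are hypothetical, and a real pipeline would likely compare several metrics rather than accuracy alone.

```python
from dataclasses import dataclass


@dataclass
class ModelVersion:
    name: str
    accuracy: float


def promote_or_keep(candidate, current, min_accuracy=0.90):
    """Return the model version that should serve traffic after validation.

    The candidate is promoted only if it clears the absolute threshold
    and is at least as accurate as the version currently in production.
    Otherwise the known-good version stays (or is rolled back to).
    """
    if candidate.accuracy >= min_accuracy and candidate.accuracy >= current.accuracy:
        return candidate
    return current
```

Running this as its own task between training and deployment means a weak model fails the gate visibly instead of reaching production silently.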

3. Monitoring and Retraining

Production AI systems must handle changing data distributions. Workflow orchestration supports:

- Scheduled performance monitoring

- Drift detection tasks

- Automated retraining flows

- Alerting and reporting mechanisms

Instead of relying on manual oversight, organizations can implement continuous learning frameworks.
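
As a rough sketch of how a drift check can feed a retraining flow, the example below measures how far the mean of recent data has moved from a reference sample, in units of the reference standard deviation. This is a deliberately simple heuristic chosen for illustration; production systems often use richer statistical tests. The function names and the threshold of 2.0 are assumptions.

```python
from statistics import mean, stdev


def drift_score(reference, recent):
    """Shift of the recent mean from the reference mean, in reference std devs.

    `reference` needs at least two values so a standard deviation exists.
    """
    spread = stdev(reference)
    shift = abs(mean(recent) - mean(reference))
    if spread == 0:
        # A constant reference sample: any shift at all counts as drift.
        return 0.0 if shift == 0 else float("inf")
    return shift / spread


def monitoring_step(reference, recent, retrain, threshold=2.0):
    """Call `retrain()` when drift exceeds the threshold; report whether it ran."""
    if drift_score(reference, recent) > threshold:
        retrain()
        return True
    return False
```

Scheduled on a regular interval, `monitoring_step` plays the role of the drift detection task, and the `retrain` callback stands in for triggering an automated retraining flow.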

Comparisons With Traditional Workflow Tools

Before specialized orchestration platforms gained traction, teams often used general-purpose schedulers or CI/CD systems. While useful, these tools were not optimized for data-intensive and state-dependent tasks common in AI pipelines.

Key differences include:

- Data Awareness: AI orchestration platforms track task states, results, and metadata across runs.

- Dynamic Workflows: Flows can adapt at runtime based on outputs.

- Task Mapping: Parallelization across datasets or parameter sets.

- Rich Observability: Specialized dashboards for pipeline tracing.

Prefect, for example, allows conditional logic within flows, enabling dynamic branching based on intermediate results. This flexibility supports advanced machine learning processes such as adaptive model selection.
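
Task mapping and runtime branching can be illustrated together with a short standard-library sketch. It is not Prefect code: `evaluate` uses a made-up scoring rule so the example runs without data, and `select_model`, `min_score`, and the returned step names are hypothetical.

```python
from concurrent.futures import ThreadPoolExecutor


def evaluate(params):
    """Stand-in for a train-and-score task; the scoring rule is invented."""
    return 1.0 - abs(params["learning_rate"] - 0.1)


def select_model(param_sets, min_score=0.95):
    """Score every parameter set in parallel, then branch on the best result."""
    # Map: run the same task once per parameter set.
    with ThreadPoolExecutor() as pool:
        scores = list(pool.map(evaluate, param_sets))

    best_score, best_params = max(
        zip(scores, param_sets), key=lambda pair: pair[0]
    )

    # Branch: the next step depends on an intermediate result.
    if best_score >= min_score:
        return ("deploy", best_params)
    return ("widen_search", None)
```

The branch is ordinary Python control flow, which is what makes this style of dynamic workflow straightforward to express when flows are written in Python rather than in a static configuration format.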

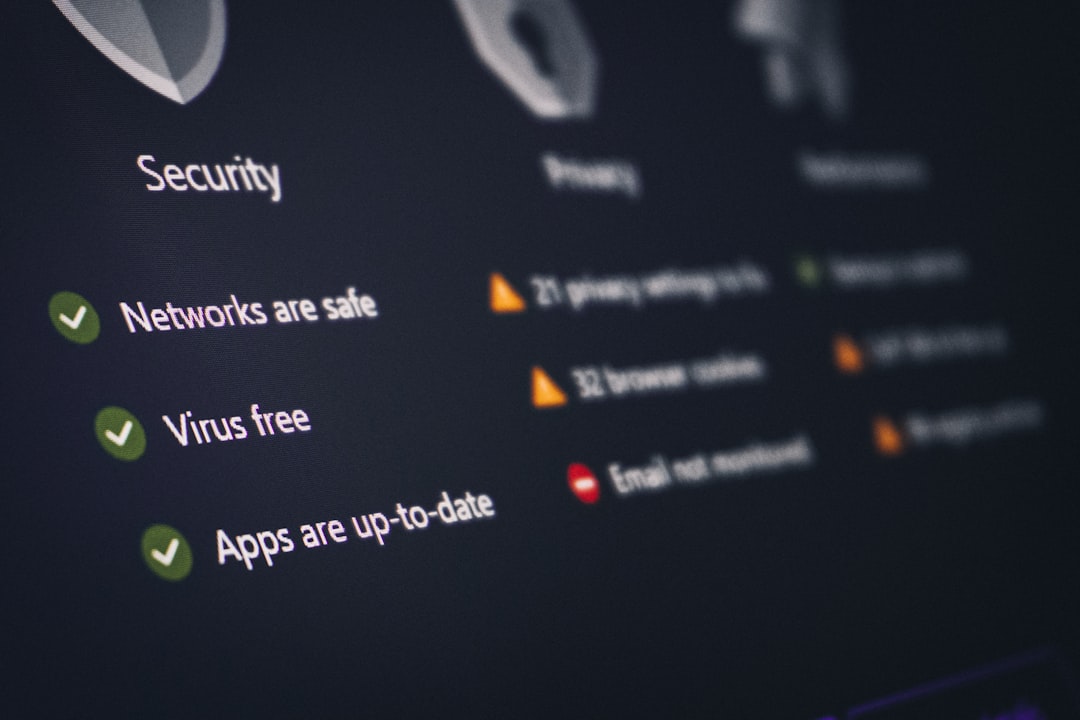

Security and Governance Considerations

As AI pipelines interact with sensitive data, governance becomes paramount. Enterprise-grade orchestration platforms address security through:

- Role-based access control

- Secure secret management

- Encrypted communication channels

- Audit logging and activity tracking

By establishing centralized control mechanisms, organizations can enforce compliance policies and regulatory standards more easily than with decentralized scripts.

Operational Efficiency and Cost Management

One of the less-discussed yet critical benefits of workflow orchestration is cost control. Cloud resources, especially GPUs, are expensive. Inefficient job scheduling or idle infrastructure can significantly inflate operational expenses.

Prefect and similar platforms optimize costs by:

- Triggering compute resources only when needed

- Scaling workloads automatically based on demand

- Stopping failed tasks early to prevent resource waste

- Providing visibility into execution metrics

This level of oversight enables data teams to align operational efficiency with financial accountability.

Organizational Impact

Adopting an AI workflow orchestration platform often signals a broader cultural shift. Organizations transition from experimental AI initiatives to systematic, production-grade operations. Benefits extend beyond technical improvements:

- Cross-team collaboration: Clear workflow definitions enhance communication.

- Standardization: Shared templates and reusable components.

- Reduced onboarding time: New engineers can understand structured flows quickly.

- Greater stakeholder trust: Transparent monitoring builds confidence in AI systems.

When workflows are well-documented and observable, business units gain visibility into how AI outputs are generated. This transparency fosters greater acceptance and strategic alignment.

Challenges and Best Practices

Despite their advantages, orchestration platforms require thoughtful implementation. Common challenges include over-engineering simple processes or creating overly complex task graphs.

Best practices include:

- Start with clear pipeline definitions before introducing orchestration layers.

- Modularize tasks to encourage reuse and clarity.

- Implement monitoring from the outset rather than retrofitting later.

- Regularly review workflow performance to identify bottlenecks.

A disciplined approach ensures that orchestration enhances agility rather than introducing unnecessary overhead.

The Future of AI Workflow Orchestration

As AI systems increasingly integrate with real-time applications, edge computing, and autonomous agents, orchestration platforms will evolve accordingly. Expect deeper integration with event-driven architectures, improved lineage tracking, and tighter alignment with model governance frameworks.

Moreover, as regulatory scrutiny intensifies, automated compliance reporting embedded within workflow systems will likely become standard. Orchestration will not simply manage tasks; it will also document accountability at every step.

Ultimately, AI workflow orchestration platforms like Prefect represent a maturation of the field. They embody the recognition that machine learning success depends not only on algorithms but on robust operational infrastructure. By automating pipelines, managing complexity, and ensuring reliability, these platforms enable organizations to transform experimental models into dependable, scalable AI systems.

In a landscape where AI drives critical business decisions, workflow orchestration is no longer optional. It is a strategic necessity that underpins sustainable innovation and operational excellence.