As organizations increasingly rely on machine learning models to drive critical decisions, the need for robust monitoring has shifted from a luxury to a necessity. Models that perform well in development can silently degrade in production, leading to inaccurate predictions, financial losses, or regulatory risks. AI model monitoring tools such as Arize AI have emerged to address this growing challenge by tracking model health, data quality, and performance over time.

TL;DR: AI model monitoring tools like Arize AI help organizations detect and respond to model drift, data quality issues, and performance degradation in real time. They provide visibility into production machine learning systems through metrics, alerts, and explainability tools. By continuously tracking inputs, outputs, and prediction outcomes, these platforms reduce risk and improve reliability. Effective monitoring is now a core component of responsible and scalable AI deployment.

Why Model Monitoring Matters in Production

When a machine learning model is trained, it learns patterns from a specific dataset at a specific point in time. However, real-world environments are dynamic. Customer preferences shift, market conditions fluctuate, and user behaviors evolve. As a result, the statistical properties of new data can diverge from those of the training data, and the model's predictive performance can decline. This decline is known as model drift.

Without effective monitoring, drift can go unnoticed for months. This silent performance degradation can affect systems such as:

- Fraud detection models missing new fraud patterns

- Recommendation engines suggesting irrelevant products

- Healthcare prediction systems providing outdated risk assessments

- Credit scoring models generating biased or inaccurate decisions

AI monitoring platforms like Arize AI provide continuous visibility into these deployed systems. Instead of relying solely on offline evaluation, teams gain real-time insight into how models behave in production environments.

Understanding Model Drift

Model drift occurs when a trained model’s predictive performance declines due to changes in data patterns or relationships. There are several key types of drift that monitoring tools are designed to detect:

1. Data Drift

Data drift refers to changes in the distribution of input features over time. For example, if an e-commerce platform starts attracting a different demographic group, the input variables feeding a recommendation engine may shift significantly.

2. Concept Drift

Concept drift happens when the relationship between inputs and outputs changes. For instance, economic downturns may alter how financial indicators correlate with consumer default risk.

3. Prediction Drift

Prediction drift refers to changes in the distribution of a model's outputs over time. Even if input data appears stable, the output predictions of a model may change unexpectedly. Monitoring predicted class distributions or probability scores can highlight hidden instability.
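
As a rough illustration of monitoring predicted class distributions, the sketch below compares the share of each predicted class in a baseline window against a recent window using total variation distance. The function names and the example labels are hypothetical, and this is one simple distance among many, not a description of how any particular platform computes prediction drift.

```python
from collections import Counter


def class_distribution(predictions):
    """Return the share of each predicted class as a dict (assumes a non-empty list)."""
    counts = Counter(predictions)
    total = len(predictions)
    return {label: n / total for label, n in counts.items()}


def total_variation_distance(baseline, current):
    """Half the L1 distance between two class distributions.

    0.0 means the distributions are identical, 1.0 means they share no classes.
    """
    labels = set(baseline) | set(current)
    return 0.5 * sum(
        abs(baseline.get(label, 0.0) - current.get(label, 0.0)) for label in labels
    )


# Hypothetical example: the share of "deny" predictions doubles between windows.
baseline_preds = ["approve"] * 80 + ["deny"] * 20
current_preds = ["approve"] * 60 + ["deny"] * 40

tvd = total_variation_distance(
    class_distribution(baseline_preds),
    class_distribution(current_preds),
)
print(f"Total variation distance: {tvd:.2f}")
```

A value near zero suggests the output mix is stable. What counts as a meaningful shift depends on the use case and has to be chosen by the team.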

Comprehensive monitoring tools track all three forms of drift simultaneously, enabling teams to pinpoint the root cause of performance issues.

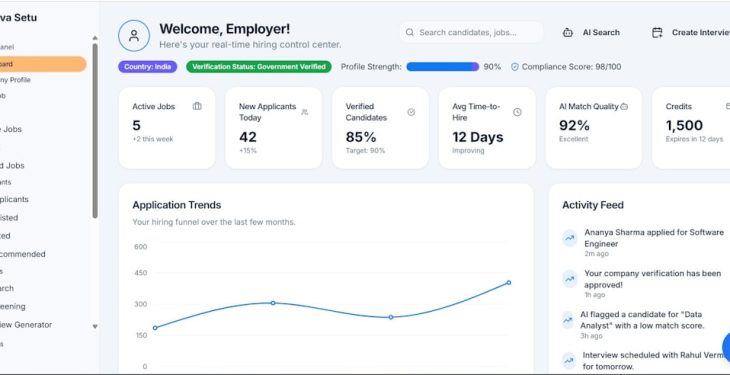

Core Capabilities of AI Monitoring Tools Like Arize AI

Modern AI observability platforms are designed to function similarly to application performance monitoring tools, but specifically for machine learning systems. Their core capabilities often include the following:

Real-Time Data Ingestion

Monitoring tools integrate directly with production pipelines to ingest model inputs, outputs, and metadata. This allows for continuous tracking of statistical patterns.

Automated Drift Detection

Statistical tests and distance metrics compare live production data to baseline training datasets. Significant deviations trigger alerts, enabling rapid response.
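
One widely used distance metric for this kind of comparison is the Population Stability Index (PSI). The sketch below is a minimal standard-library version that assumes a single numeric feature, equal-width bins derived from the baseline, and non-empty samples. The function name, bin count, and smoothing constant are illustrative choices, not the method of any specific tool.

```python
import math


def psi(baseline, production, n_bins=10, eps=1e-6):
    """Population Stability Index between two numeric samples.

    Bins are equal-width and derived from the baseline range. Production
    values outside that range are clamped into the first or last bin.
    """
    lo, hi = min(baseline), max(baseline)
    width = (hi - lo) / n_bins or 1.0  # fall back to 1.0 if the baseline is constant

    def bin_shares(values):
        counts = [0] * n_bins
        for v in values:
            idx = int((v - lo) / width)
            idx = min(max(idx, 0), n_bins - 1)
            counts[idx] += 1
        # eps avoids log(0) and division by zero for empty bins
        return [max(c / len(values), eps) for c in counts]

    expected = bin_shares(baseline)
    actual = bin_shares(production)
    return sum((a - e) * math.log(a / e) for a, e in zip(actual, expected))


# Hypothetical data: production values are shifted upward relative to training.
baseline = [i / 100 for i in range(1000)]
shifted = [v + 3.0 for v in baseline]

print(f"PSI, identical samples: {psi(baseline, baseline):.3f}")
print(f"PSI, shifted sample:    {psi(baseline, shifted):.3f}")
```

A common rule of thumb treats PSI below 0.1 as stable and above 0.25 as a significant shift, but these cutoffs are conventions rather than guarantees and should be tuned per feature.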

Performance Monitoring

When ground truth labels become available, platforms calculate performance metrics such as accuracy, precision, recall, and F1 score for classification models, or error metrics such as MAE and RMSE for regression models.
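
Because labels often arrive after the prediction was served, performance is typically computed only over predictions that have been matched to an outcome. The sketch below assumes binary predictions and labels stored as dictionaries keyed by a hypothetical prediction ID. It illustrates the join-then-score idea only, not a specific platform's API.

```python
def binary_metrics(predictions, labels):
    """Score only the predictions whose ground truth label has arrived.

    predictions, labels: dicts mapping prediction_id -> 0 or 1.
    """
    tp = fp = fn = tn = 0
    for pid, y_true in labels.items():
        if pid not in predictions:
            continue  # a label with no logged prediction cannot be scored
        y_pred = predictions[pid]
        if y_pred == 1 and y_true == 1:
            tp += 1
        elif y_pred == 1 and y_true == 0:
            fp += 1
        elif y_pred == 0 and y_true == 1:
            fn += 1
        else:
            tn += 1

    scored = tp + fp + fn + tn
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    f1 = 2 * precision * recall / (precision + recall) if precision + recall else 0.0
    accuracy = (tp + tn) / scored if scored else 0.0
    return {
        "scored": scored,
        "accuracy": accuracy,
        "precision": precision,
        "recall": recall,
        "f1": f1,
    }


# Hypothetical example: prediction "e" has no label yet, so it is excluded.
predictions = {"a": 1, "b": 1, "c": 0, "d": 0, "e": 1}
labels = {"a": 1, "b": 0, "c": 1, "d": 0}

metrics = binary_metrics(predictions, labels)
print(metrics)
```

Reporting the number of scored predictions alongside the metrics helps show how much of recent traffic the figures actually cover.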

Explainability and Root Cause Analysis

Monitoring does not stop at detection. Tools like Arize AI also include explainability dashboards that help teams understand which features are contributing most to drift or prediction changes.

Alerting and Workflow Integration

Configurable alerts notify data scientists and engineers when thresholds are breached. Integration with messaging and incident management systems routes those alerts to the responsible team so that action can be taken quickly.
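
A simple way to picture a configurable alert is a rule that fires only after a metric stays above its threshold for several consecutive checks, which filters out one-off spikes. The class below is a hypothetical sketch of that idea. The name, parameters, and "fire once per streak" behavior are assumptions made for illustration.

```python
class ThresholdAlert:
    """Fire once when a metric exceeds `threshold` for `consecutive` checks in a row."""

    def __init__(self, threshold, consecutive=3):
        self.threshold = threshold
        self.consecutive = consecutive
        self._streak = 0

    def observe(self, value):
        """Record one metric value and return True if the alert should fire now."""
        if value > self.threshold:
            self._streak += 1
        else:
            self._streak = 0  # any healthy reading resets the streak
        # Fire only at the moment the streak reaches the required length,
        # so a long-running breach does not produce repeated notifications.
        return self._streak == self.consecutive


# Hypothetical drift scores from successive monitoring windows.
alert = ThresholdAlert(threshold=0.25, consecutive=3)
scores = [0.10, 0.30, 0.30, 0.30, 0.30, 0.10]
fired = [alert.observe(score) for score in scores]
print(fired)
```

In practice the `True` result would be handed to whatever notification channel the team uses. That integration is left out here because it depends on the tooling in place.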

The Architecture Behind AI Observability

Behind the scenes, AI monitoring platforms rely on scalable architectures capable of handling large volumes of streaming data.

A typical monitoring architecture includes:

- Data collectors embedded in production systems

- Centralized storage for input features and predictions

- Statistical engines calculating drift and performance metrics

- Visualization dashboards for analytics and reporting
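
The components above can be sketched as a toy in-memory pipeline: a collector that logs each prediction, a store that holds the records, and a statistics step that summarizes features for reporting. All class and function names here are hypothetical, and a real deployment would replace the in-memory list with durable, scalable storage.

```python
from dataclasses import dataclass, field
from statistics import mean


@dataclass
class PredictionRecord:
    """One logged inference: identifier, input features, and model output."""
    prediction_id: str
    features: dict
    prediction: float


@dataclass
class InMemoryStore:
    """Stand-in for centralized storage (an assumption made for this sketch)."""
    records: list = field(default_factory=list)

    def append(self, record):
        self.records.append(record)


def collect(store, prediction_id, features, prediction):
    """Data collector: called by the serving code after each prediction."""
    store.append(PredictionRecord(prediction_id, dict(features), prediction))


def feature_means(store):
    """Statistical engine: per-feature mean over all stored records."""
    names = sorted({name for r in store.records for name in r.features})
    return {
        name: mean(r.features[name] for r in store.records if name in r.features)
        for name in names
    }


# Hypothetical usage: two logged predictions, then a summary for a dashboard.
store = InMemoryStore()
collect(store, "p1", {"amount": 10.0, "age": 30.0}, 0.2)
collect(store, "p2", {"amount": 30.0, "age": 50.0}, 0.7)

summary = feature_means(store)
print(summary)
```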

Scalability is critical. Enterprise organizations may generate millions of predictions per hour, requiring monitoring platforms to maintain high throughput and low latency.

Benefits of Using Tools Like Arize AI

Organizations that implement AI model monitoring solutions gain several strategic advantages:

Reduced Operational Risk

Early drift detection helps prevent costly downstream errors. For regulated industries, it also helps maintain compliance with model governance standards.

Improved Model Lifespan

Continuous observability enables proactive retraining decisions. Instead of waiting for catastrophic failure, teams can retrain models at optimal intervals.

Faster Debugging

Centralized dashboards provide granular insight into feature behavior, reducing time spent investigating performance anomalies.

Increased Stakeholder Trust

When organizations can demonstrate clear tracking and accountability of AI behavior, executives and regulators gain confidence in automated systems.

Use Cases Across Industries

The need for AI monitoring spans multiple sectors:

- Financial Services: Detecting drift in credit underwriting or fraud detection models.

- Healthcare: Ensuring predictive models remain accurate as patient populations change.

- Retail and E-commerce: Monitoring recommendation systems and demand forecasting models.

- Technology Platforms: Tracking search ranking, content personalization, and ad targeting models.

In each case, even small drops in performance can translate into significant financial or operational consequences.

Best Practices for Implementing Model Monitoring

Simply deploying a monitoring tool is not enough. Effective implementation requires strategic planning:

- Define Clear Baselines: Establish reference datasets and performance benchmarks at deployment.

- Monitor Both Data and Predictions: Tracking only accuracy is insufficient without feature-level visibility.

- Set Meaningful Alert Thresholds: Avoid alert fatigue by focusing on material deviations.

- Align Monitoring With Retraining Pipelines: Automate retraining triggers when thresholds are met.

- Document Governance Processes: Maintain audit trails for compliance and transparency.
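
As one way to connect monitoring with retraining and audit trails, the sketch below combines a drift score with a drop in a performance metric and returns a timestamped decision record that could be logged. The function name, default thresholds, and record fields are all assumptions. Appropriate values depend on the model and the business context.

```python
from datetime import datetime, timezone


def retraining_decision(
    drift_score,
    baseline_metric,
    current_metric,
    drift_threshold=0.25,   # assumed cutoff for a drift score such as PSI
    max_metric_drop=0.05,   # assumed tolerated drop from the deployment baseline
):
    """Return an auditable record stating whether retraining should be triggered."""
    reasons = []
    if drift_score > drift_threshold:
        reasons.append(f"drift score {drift_score:.3f} exceeds {drift_threshold}")
    metric_drop = baseline_metric - current_metric
    if metric_drop > max_metric_drop:
        reasons.append(f"metric dropped by {metric_drop:.3f} from baseline")
    return {
        "retrain": bool(reasons),
        "reasons": reasons,
        "checked_at": datetime.now(timezone.utc).isoformat(),
    }


# Hypothetical checks: the first breaches the drift threshold, the second is healthy.
drifted = retraining_decision(drift_score=0.30, baseline_metric=0.90, current_metric=0.88)
healthy = retraining_decision(drift_score=0.10, baseline_metric=0.90, current_metric=0.89)

print(drifted["retrain"], drifted["reasons"])
print(healthy["retrain"], healthy["reasons"])
```

Keeping the reasons and timestamp with each decision gives reviewers a trail showing why a retraining job was or was not started.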

Organizations that treat monitoring as a fundamental part of the machine learning lifecycle, rather than an afterthought, tend to achieve greater long-term model stability.

The Future of AI Model Observability

As AI systems grow more complex, monitoring tools are evolving accordingly. Future capabilities are likely to include:

- Deeper integration with large language models and generative AI systems

- Automated root cause analysis using meta-modeling techniques

- Continuous evaluation of fairness and bias metrics

- Enhanced anomaly detection for unstructured data inputs

In addition, regulatory frameworks worldwide are placing greater emphasis on AI accountability. Observability platforms will play a foundational role in helping organizations meet transparency and risk management requirements.

Tools like Arize AI signal a broader industry shift: machine learning is no longer just about building accurate models; it is about maintaining reliable systems over time.

FAQ: AI Model Monitoring and Arize AI

1. What is model drift?

Model drift refers to the degradation in a machine learning model’s performance due to changes in data distributions or relationships between inputs and outputs over time.

2. How does Arize AI detect drift?

Arize AI uses statistical comparison techniques to measure differences between training data and live production data. It identifies significant deviations and triggers alerts when thresholds are exceeded.

3. Is model monitoring necessary for all machine learning systems?

Any model operating in a dynamic environment or influencing critical decisions benefits from monitoring. The higher the risk associated with incorrect predictions, the more essential monitoring becomes.

4. Can monitoring tools improve model accuracy?

Monitoring tools do not directly improve accuracy, but they provide the insights needed to retrain or adjust models before significant degradation occurs.

5. How often should models be retrained?

Retraining frequency depends on data volatility and business requirements. Monitoring tools help determine optimal retraining intervals based on detected drift and performance metrics.

6. Are AI monitoring tools only for large enterprises?

While enterprise-scale systems benefit significantly, smaller organizations deploying production models can also use monitoring tools to minimize risk and maintain long-term model reliability.